Int Neurourol J, Volume 26(1), 2022

ABSTRACT

Purpose

This paper proposes a technological system that uses artificial intelligence to recognize and guide the operator to the exact stenosis area during endoscopic surgery in patients with urethral or ureteral strictures. The aim of this technological solution was to increase surgical efficiency.

Methods

The proposed system utilizes the ResNet-50 algorithm, an artificial intelligence technology, and analyzes images entering the endoscope during surgery to detect the stenosis location accurately and provide intraoperative clinical assistance. The ResNet-50 algorithm was chosen to facilitate accurate detection of the stenosis site.

Results

The high recognition accuracy of the system was confirmed by an average final sensitivity value of 0.96. Since sensitivity is the probability that a truly positive case is detected, this finding indicates that the system can provide accurate guidance to the stenosis area when used for support in actual surgery.

Conclusions

The proposed method supports surgery for patients with urethral or ureteral strictures by applying the ResNet-50 algorithm. The system analyzes images entering the endoscope during surgery and accurately detects stenosis, thereby assisting in surgery. In future research, we intend to provide both conservative and flexible boundaries of the strictures.

A urethral or ureteral stricture [1-8] refers to a narrowing or contraction of the diameter of the urethra or ureter, the tubes that carry urine out of and into the bladder, respectively. Urethral and ureteral strictures can be either congenital or acquired. They can be caused by incomplete ureteral muscle formation, damage to the ureter due to trauma or surgery, or thickening of the ureter wall due to infection of the ureteral lining; stenosis can also result from urinary stones, malignant tumors, and prostate hypertrophy. The most common symptom is abdominal or flank pain, and strictures can cause urinary tract infections due to stagnant urine, leading to fever, pain when urinating, and hematuria. Early detection and treatment can lead to long-term resolution without major problems in daily life, but if a stricture persists, the risk of urinary tract infection, urinary tract stones, hematuria, and renal function decline may increase. Therefore, surgery is necessary as a fundamental curative treatment.

Surgery usually involves either an endoscopic incision of the narrowed urethra or ureter, or an operation to remove the narrowed segment and connect the healthy ends. In the past, laparotomy was predominantly performed, but in recent years, endoscopic and laparoscopic surgery have been performed most frequently. However, methodological issues remain in endoscopic and laparoscopic surgery. In particular, only the area of stenosis should be removed; if resection is not limited to the stenosis area, damage to the normal area (bleeding, nerve damage, etc.) can occur, with potentially fatal consequences. Therefore, it is of pivotal importance to limit surgery to the area of stenosis, and for this purpose, advanced information technology frameworks, such as artificial intelligence, can be considered.

With this in mind, this paper proposes a technological system that uses artificial intelligence to recognize and guide the operator to the exact stenosis area during endoscopic surgery in patients with urethral or ureteral strictures. This technological solution was developed with the goal of increasing surgical efficiency.

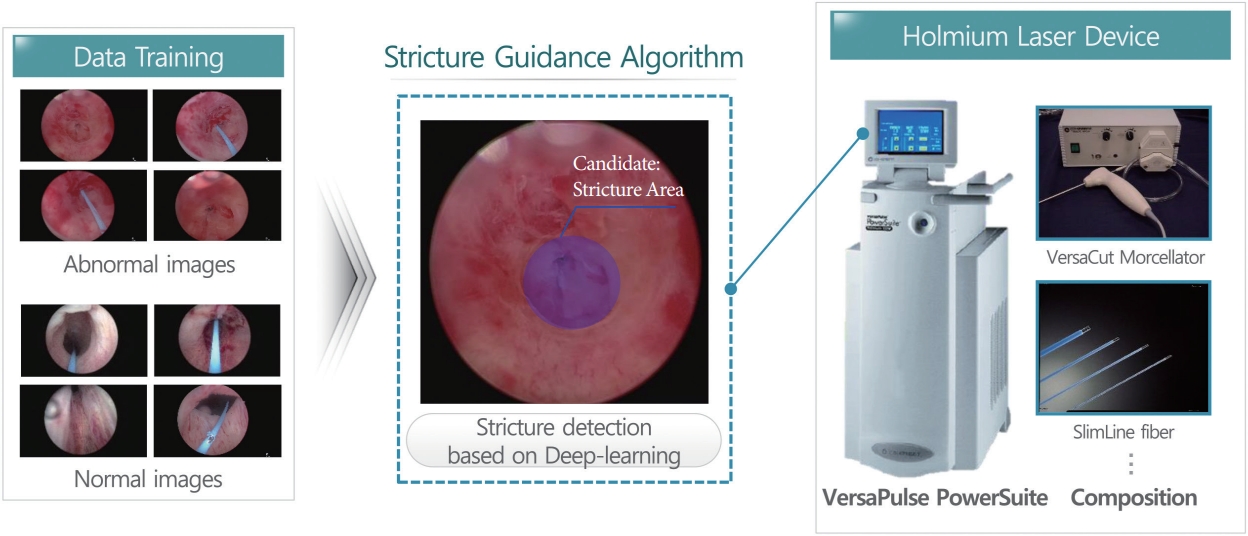

This paper proposes a technological system that supports surgery for patients with urethral or ureteral strictures by applying the ResNet-50 algorithm [9-13], an artificial intelligence technology. The developed system analyzes images entering the endoscope during surgery to detect the stenosis area accurately and provide intraoperative clinical assistance. The ResNet-50 algorithm was chosen to facilitate more accurate detection of the stenosis site. Fig. 1 presents a conceptual diagram of the proposed method.

Numerous studies in the medical field have applied deep learning to image recognition, voice classification and recognition, and object detection. Deep learning has demonstrated good performance in the machine learning field owing to advances in high-performance hardware (e.g., GPUs) and big data. Among deep learning models, convolutional neural networks (CNNs) [14], which are widely used in the field of image recognition, were developed to overcome overfitting, convergence to local optima, and vanishing gradients by modifying the structure of existing artificial neural networks. LeCun et al. [15] constructed a model that took 32×32 black-and-white images of handwritten text as input and generated 10 outputs, with the goal of recognizing handwriting, but this model did not receive much attention at the time because of its long training times. The speed of CNNs has since improved significantly due to the development of high-performance hardware and big data. It is now easy to collect the data necessary for supervised and unsupervised learning, with representative image sources including ImageNet [16], Flickr [17], and the INRIA Person dataset [18]. Applying the algorithm presented by Zeiler and Fergus [19] to the existing overfitting problem has resolved many issues and yielded excellent performance in object classification and detection. CNNs are better than conventional object detection and learning methods at automatically detecting shapes from input images, and they have the advantage of extracting and learning shapes within a single structure.

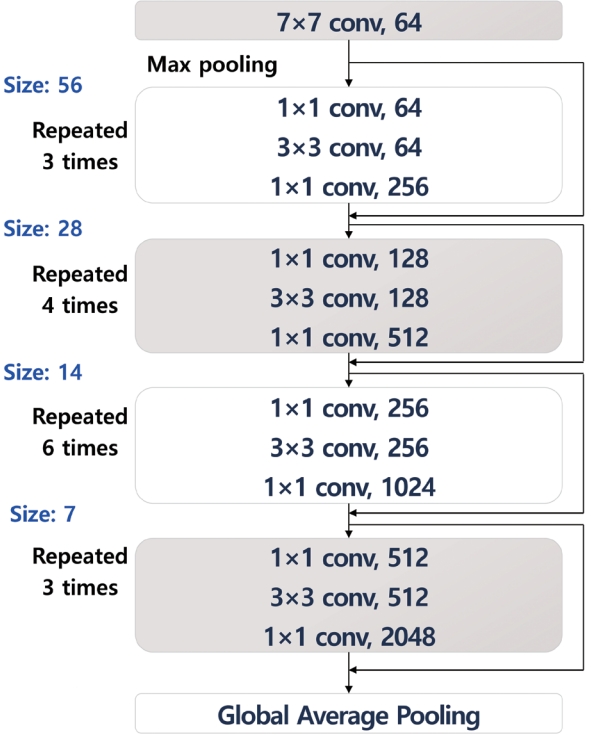

The method proposed in this paper is intended to provide guidance in surgery for urethral and ureteral stenosis. Endoscopic images are preprocessed into an appropriate form for application of the algorithm and labeled as normal or abnormal. The input settings of the neural network are then configured. Thereafter, ResNet-50 is applied to provide guidance for identifying urethral and ureteral strictures. The process of the algorithm is shown in Fig. 2.

Compared with traditional object segmentation and identification methods, a system using a deep convolutional neural network (DCNN) called AlexNet [20] showed remarkable recognition performance, which prompted further studies on deep neural networks. The reason for this high level of performance is that the system can extract low-, medium-, and high-level features by repeating the basic structure of a CNN; increasing the depth and width of a CNN is therefore expected to improve its recognition performance. However, as a neural network’s depth and width increase, the number of parameters and the number of operations required for learning also increase, and this problem must be solved. GoogLeNet [21] introduced the inception module, in which a large neural network is connected sparsely to reduce the number of parameters to be learned, thereby allowing the neural network to be deeper. In the development of ResNet, it was found that increasing the DCNN’s model depth led to increased errors in the learning process, prompting the researchers to introduce shortcut connections to minimize these learning errors.
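The idea of a shortcut connection can be illustrated with a minimal sketch. The pure-Python example below is not the implementation used in this study: `residual_block`, `relu`, and `zero_transform` are hypothetical names, and plain lists stand in for feature maps, whereas a real ResNet block uses convolutions and normalization layers. It only shows that the block output is the learned transform plus the unchanged input.

```python
from typing import Callable, List

Vector = List[float]


def relu(v: Vector) -> Vector:
    """Element-wise rectified linear unit."""
    return [max(0.0, x) for x in v]


def residual_block(x: Vector, transform: Callable[[Vector], Vector]) -> Vector:
    """Return relu(F(x) + x), where F is the transform learned by the block.

    The '+ x' term is the shortcut (identity) connection: when F outputs
    zeros, the block passes its rectified input through unchanged.
    """
    fx = transform(x)
    if len(fx) != len(x):
        raise ValueError("shortcut requires matching input and output sizes")
    return relu([f + xi for f, xi in zip(fx, x)])


def zero_transform(v: Vector) -> Vector:
    """Hypothetical stand-in for the stacked layers of one block."""
    return [0.0 for _ in v]


if __name__ == "__main__":
    print(residual_block([1.0, 2.0, 3.0], zero_transform))
```

Because the identity path adds no weights of its own, stacking such blocks deepens the network without adding parameters for the shortcuts themselves.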

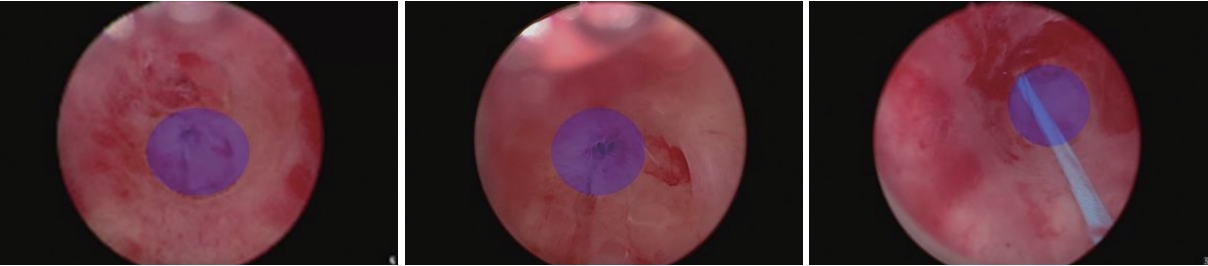

The ResNet model has the advantage of being able to mitigate the vanishing gradient problem, which arises during back-propagation in the learning process. Because the shortcut connections do not significantly change the structure of the existing network, they do not themselves increase the number of parameters to be learned as the DCNN’s depth increases. Because of this, partial learning is conducted in each residual block consisting of 2 to 3 layers, instead of optimizing weights over the whole neural network [22]. The ResNet-50 model is a 50-layer deep learning model; to enhance stenosis detection, learning started from the ImageNet weights obtained using data from over 3 million real-life images. In the proposed pipeline, images are resized to 512×512 pixels and processed in real time, preprocessing rescales the pixel values to the range −128 to +128, and images are transformed (enlargement, reduction, rotation, and shifting) at the time of learning in order to adapt to various changes. The learning parameters were as follows: epoch number, 100; batch size, 12; learning rate, 0.001–0.00001; anchor sizes, 32, 64, 128, 256, and 512; anchor ratios, 0.5, 1, and 2; and anchor scales, 1, 2^(1/3), and 2^(2/3). Fig. 3 shows the structure of ResNet-50 that was applied for actual detection. Fig. 4 shows a sample result of applying the ResNet-50 algorithm.
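To make the anchor parameters concrete, the following sketch enumerates the anchor box shapes they imply. It rests on several assumptions that the text does not state: the anchor scales are read as 2^0, 2^(1/3), and 2^(2/3); each ratio is taken as height divided by width; and each anchor's area is (size × scale)². The function name `anchor_shapes` and the constants are hypothetical.

```python
import math
from typing import List, Tuple

# Values taken from the listed learning parameters.
ANCHOR_SIZES = [32, 64, 128, 256, 512]
ANCHOR_RATIOS = [0.5, 1.0, 2.0]  # assumed to mean height / width
ANCHOR_SCALES = [2 ** 0, 2 ** (1 / 3), 2 ** (2 / 3)]  # assumed reading


def anchor_shapes(size: float) -> List[Tuple[float, float]]:
    """Return (width, height) for every scale and ratio at one base size."""
    shapes = []
    for scale in ANCHOR_SCALES:
        area = (size * scale) ** 2  # assumed: area is fixed per scale
        for ratio in ANCHOR_RATIOS:
            width = math.sqrt(area / ratio)
            height = width * ratio
            shapes.append((width, height))
    return shapes


if __name__ == "__main__":
    for size in ANCHOR_SIZES:
        print(size, [(round(w, 1), round(h, 1)) for w, h in anchor_shapes(size)])
```

Under these assumptions, each base size yields 9 shapes (3 scales × 3 ratios), for 45 shapes across the 5 sizes.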

In order to evaluate the performance of the proposed method, learning was performed using 150 control videos and endoscopic videos from 150 urethral and ureteral stricture patients. During learning, regions-of-interest were labeled for ResNet-50 to identify the stenosis areas in patient data, and the performance of the model was evaluated by comparing the clinical results as ground truth with the model-based guidance.

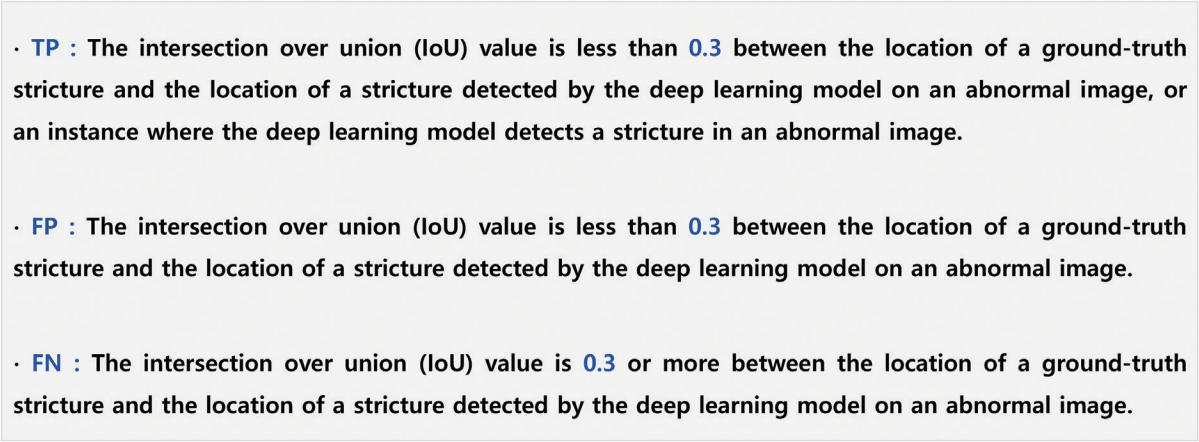

The learning data were classified as normal or abnormal and displayed as sample data to train the model. True positive (TP), false negative (FN), and false positive (FP) were calculated by performing 10-fold cross-validation only with the collected still images. Fig. 5 presents definitions of the concepts used in a confusion matrix.
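A 10-fold split of the kind described here can be sketched as follows. This is an illustrative example only: the function name `k_fold_indices`, the fixed random seed, and the image count of 300 are hypothetical, since the number of still images is not given.

```python
import random
from typing import List, Tuple


def k_fold_indices(
    n_items: int, k: int = 10, seed: int = 0
) -> List[Tuple[List[int], List[int]]]:
    """Split item indices into k (train, test) pairs.

    Each item lands in exactly one test fold; the other k - 1 folds
    form the corresponding training set.
    """
    indices = list(range(n_items))
    random.Random(seed).shuffle(indices)
    folds = [indices[i::k] for i in range(k)]
    splits = []
    for i in range(k):
        test = folds[i]
        train = [idx for j, fold in enumerate(folds) if j != i for idx in fold]
        splits.append((train, test))
    return splits


if __name__ == "__main__":
    # 300 is a placeholder count, not the study's number of still images.
    for round_no, (train, test) in enumerate(k_fold_indices(300), start=1):
        print(round_no, len(train), len(test))
```

TP, FN, and FP counts would then be accumulated on the test fold of each round.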

The intersection over union calculation and the concept of cross-validation are illustrated in Fig. 6, and the numbers of TP, FP, and FN results were counted. The details are shown in Table 1. The final value of sensitivity, which is calculated from TP and FN as TP/(TP+FN) and measures the probability that a true stenosis is detected, showed high recognition accuracy, with an average value of 0.96. This finding indicated that accurate guidance to the area of stenosis was possible when the developed platform was used to support actual surgery. In the cross-validation, the data were divided into a total of 10 sets; in each of 10 rounds, 9 sets were used for learning and the remaining set was used for testing. Overall, high sensitivity was obtained across the 10 sets, supporting the model’s effectiveness. However, some FP results were derived from normal images, showing that the clinical support provided by this system could not fully replace the clinician’s judgment.
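The two quantities used in this evaluation can be written out in a short sketch. The box format `(x_min, y_min, x_max, y_max)`, the function names, and the example counts are assumptions made for illustration; they are not values from Table 1.

```python
from typing import Tuple

Box = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max)


def intersection_over_union(a: Box, b: Box) -> float:
    """Area of overlap divided by area of union for two axis-aligned boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def sensitivity(tp: int, fn: int) -> float:
    """Sensitivity (recall) = TP / (TP + FN)."""
    if tp + fn == 0:
        raise ValueError("sensitivity is undefined when TP + FN is zero")
    return tp / (tp + fn)


if __name__ == "__main__":
    # Hypothetical boxes and counts, for illustration only.
    print(intersection_over_union((0, 0, 2, 2), (1, 1, 3, 3)))
    print(sensitivity(tp=96, fn=4))
```

Note that FP results do not enter the sensitivity formula, which is why a model can have high sensitivity and still produce unnecessary guidance on normal images.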

Numerous FP results were detected when the foreign object portion or boundary and the grayscale information were very ambiguous. Further research to improve weight optimization appears necessary to reduce the number of FP results. Since this system is a guide to anatomical structures, FP results may not be of vital real-world importance, but it would be preferable to avoid inaccurate boundary information when supporting surgery in order to minimize unnecessary guidance. Considering these points, it is necessary to explore various optimization measures that can reduce the number of FP results. Nonetheless, the high sensitivity of the proposed method indicates its accuracy and effectiveness.

Various improvement methods can be considered to reduce the number of FP results. To support the surgical guide in real time, frame-based processing criteria can be considered. When processing is based on 30 frames, a criterion is set for how many images must be evaluated before a location is judged to be a stenosis site. In general, requiring that 4 consecutive images be classified as stenosis areas is considered a way to reduce FP results. Another improvement method is to compare the previous and subsequent frames while tracking the stenosis site continuously; if a completely different result appears in between, it is judged to be incorrect. In other words, a result is treated as erroneous when it breaks the continuity of the surrounding frames. It is also necessary to analyze each frame together with the preceding and following images as a continuous series, rather than judging a stenosis area from a single image. Of course, such frame-based processing would likely require simultaneous upgrades to hardware components such as GPUs.
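The two frame-based ideas above can be sketched as simple filters over per-frame detection flags. This is a minimal illustration under stated assumptions, not part of the evaluated system: each frame is reduced to a single boolean ("stenosis detected"), and the function names `confirm_stenosis` and `suppress_isolated` are hypothetical.

```python
from typing import Iterable, List


def confirm_stenosis(frame_flags: Iterable[bool], min_consecutive: int = 4) -> List[bool]:
    """Confirm a detection only after enough consecutive positive frames."""
    confirmed = []
    run = 0
    for flag in frame_flags:
        run = run + 1 if flag else 0  # length of the current positive run
        confirmed.append(run >= min_consecutive)
    return confirmed


def suppress_isolated(frame_flags: List[bool]) -> List[bool]:
    """Replace a frame's result when both neighbouring frames disagree with it."""
    smoothed = list(frame_flags)
    for i in range(1, len(frame_flags) - 1):
        prev_flag, next_flag = frame_flags[i - 1], frame_flags[i + 1]
        if prev_flag == next_flag and frame_flags[i] != prev_flag:
            smoothed[i] = prev_flag
    return smoothed


if __name__ == "__main__":
    flags = [True, False, True, True, True, True, True]
    print(confirm_stenosis(flags))
    print(suppress_isolated([True, True, False, True, True]))
```

The first filter trades a short delay (3 extra frames at the default setting) for fewer spurious alerts; the second needs the following frame, so it also adds one frame of latency.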

Finally, the specifications for the endoscopic laser equipment used for performance evaluation are shown in Table 2 below. The equipment was a standard 100-W holmium laser, and the laser classification, preparation, and input power are described.

This paper proposes a technological system that guides the clinician to the exact stenosis area using artificial intelligence during endoscopic surgery in patients with urethral or ureteral strictures. This system, which uses the ResNet-50 algorithm, was developed with the goal of increasing surgical efficiency. The system analyzes images entering the endoscope during surgery to detect stenosis accurately and thereby provide clinical assistance in surgery. The ResNet-50 algorithm was chosen to improve the accuracy of detection.

High recognition accuracy was confirmed by an average sensitivity value of 0.96. Sensitivity, which is calculated based on the number of TP and FN results, is a measure of how likely positive results are to be reported when the condition in question is truly present. Numerous FP results were derived when the foreign object portion or boundary and grayscale information were very ambiguous. Therefore, further research on weight optimization is needed to reduce the FP rate. Although FP results may not be likely to cause confusion in real-world clinical applications, it would still be preferable to reduce unnecessary guidance.

In future research, we intend to provide boundary information in 2 forms: conservative boundaries and flexible boundaries. The boundary information in the present study could be described as flexible, whereas conservative boundary information will reduce patient bleeding. The plan is to develop a method of conservative boundary determination by limiting boundary extraction through the minimization of invariant values, such as moment values, as well as adjusting threshold values. This future step aims to bring about benefits in terms of the effects of surgery on the patient.

In addition, the proposed surgical guide technology can be applied to robotic systems [23] used in endoscopic and laparoscopic surgery. In particular, the industry is developing robotic systems to treat ureteral and kidney stones, and the proposed method could serve as a key technology for guiding treatment areas in real time when integrated with such robots. The core functions of these robotic systems usually include precise control, force detection, stone size measurement, automatic endoscope insertion and extraction, safety protocols, and radiation exposure protection. Speed control and guidance of the endoscope are also considered helpful techniques. Beyond detecting the area itself, it would be meaningful to provide additional information that can help the actual surgery, such as whether the surgery is progressing quickly or whether a stenosis has been missed. If technology that automatically detects surgical targets such as stones using artificial intelligence is added to these functions, the added value is expected to be even higher. The base technology of this paper is intended for use in various surgical fields.

NOTES

Funding Support

This work was supported by the research fund of Chungnam National University Hospital.

Research Ethics

This research was approved by the Institutional Review Board of Chungnam National University Hospital (approval number: CNUIRB2022-018).

REFERENCES

1. Konstantinos-Vaios M, Athanasios O, Ioannis S, Marina K, George M, Evangelia N, et al. Defining voiding dysfunction in women: bladder outflow obstruction versus detrusor underactivity. Int Neurourol J 2021;25:244-51. PMID: 33957716

2. Yu J, Jeong BC, Jeon SS, Lee SW, Lee KS. Comparison of efficacy of different surgical techniques for benign prostatic obstruction. Int Neurourol J 2021;25:252-62. PMID: 33957718

3. Mytilekas KV, Oeconomou A, Sokolakis I, Kalaitzi M, Mouzakitis G, Nakopoulou E, et al. Defining voiding dysfunction in women: bladder outflow obstruction versus detrusor underactivity. Int Neurourol J 2021;25:244-51. PMID: 33957716

4. Kim HW, Lee JZ, Shin DG. Pathophysiology and management of long-term complications after transvaginal urethral diverticulectomy. Int Neurourol J 2021;25:202-9. PMID: 34610713

5. Jang EB, Hong SH, Kim KS, Park SY, Kim YT, Yoon YE, et al. Catheter-related bladder discomfort: how can we manage it? Int Neurourol J 2020;24:324-31. PMID: 33401353

6. Kwon WA, Lee SY, Jeong TY, Moon HS. Lower urinary tract symptoms in prostate cancer patients treated with radiation therapy: past and present. Int Neurourol J 2021;25:119-27. PMID: 33504132

7. Baser A, Zumrutbas AE, Ozlulerden Y, Alkıs O, Oztekın A, Celen S, et al. Is there a correlation between behçet disease and lower urinary tract symptoms? Int Neurourol J 2020;24:150-5. PMID: 32615677

8. Kim SJ, Choo HJ, Yoon H. Diagnostic value of the maximum urethral closing pressure in women with overactive bladder symptoms and functional bladder outlet obstruction. Int Neurourol J 2022;26(Suppl 1):S1-7. PMID: 35236047

9. Wen L, Li X, Gao L. A transfer convolutional neural network for fault diagnosis based on ResNet-50. Neural Comput Appl 2020;32:6111-24.

10. Kim JW, Kim SJ, Park JM, Na YG, Kim KH. Past, present, and future in the study of neural control of the lower urinary tract. Int Neurourol J 2020;24:191-9. PMID: 33017890

11. Koonce B. Convolutional neural networks with swift for tensorflow. Berkeley (CA): Apress; 2021.

12. Quach LD, Quoc NP, Thi NH, Tran DC, Hassan MF. Using surf to improve resnet-50 model for poultry disease recognition algorithm. 2020 International Conference on Computational Intelligence (ICCI). 2020 Dec 12-13; IEEE; 2020. 317-21.

13. Miranda ND, Novamizanti L, Rizal S. Convolutional Neural Network pada klasifikasi sidik jari menggunakan RESNET-50. J Tek Inform (Jutif) 2020;1:61-8.

14. Esteva A, Kuprel B, Novoa RA, Ko J, Swetter SM, Blau HM, et al. Dermatologist-level classification of skin cancer with deep neural networks. Nature 2017;542:115-8. PMID: 28117445

15. Lecun Y, Bottou L, Bengio Y, Haffner P. Gradient-based learning applied to document recognition. Proc IEEE 1998;86:2278-324.

16. ImageNet [Internet]. Stanford Vision Lab, Stanford University, Princeton University; c2022 [cited 2022 Jan 20]. Available from: http://image-net.org/.

17. Flickr [Internet]. Flickr; c2022 [cited 2022 Jan 15]. Available from: https://www.flickr.com/.

18. INRIA Person dataset [Internet]. c2022 [cited 2022 Jan 12]. Available from: http://pascal.inrialpes.fr/data/human/.

19. Zeiler MD, Fergus R. Visualizing and understanding convolutional networks. In: Fleet D, Pajdla T, Schiele B, Tuytelaars T, editors. Computer vision–ECCV 2014. ECCV 2014. Lecture Notes in computer science, vol 8689. Cham: Springer; 2014. https://doi.org/10.1007/978-3-319-10590-1_53.

20. Krizhevsky A, Sutskever I, Hinton GE. ImageNet classification with deep convolutional neural networks. Advances in Neural Information Processing Systems 25: 26th Annual Conference on Neural Information Processing Systems 2012. 2012 Dec 3-6; Lake Tahoe (NV), USA: 2012. 25:1097-105.

21. Szegedy C, Liu W, Jia Y, Sermanet P, Reed S, Anguelov D, et al. Going deeper with convolutions. Proceedings of the IEEE International Conference on Computer Vision and Pattern Recognition. 2015 Jun 6-12; Boston (MA), USA: 2015. 1-9.

22. Kim MK. Feature extraction on a periocular region and person authentication using a ResNet model. J Korea Multimed Soc 2019;22:1347-55.

23. Cho JM, Moon KT, Yoo TK. Robotic simple prostatectomy: why and how? Int Neurourol J 2020;24:12-20. PMID: 32252182

Table 1.

Performance evaluation results of cross-validation

Table 2.

Holmium laser device structure